We honor the service of all health care workers. Here are just a few of the women who have shaped American health history and places associated with them.

-

Dr. Virginia Alexander

Dr. Virginia M. Alexander was a pioneering Black doctor and public health expert who studied racism in the healthcare system.

-

Cora Reynolds Anderson

The first Native American woman to serve in a state legislature, Anderson championed public health.

-

Dr. Elizabeth Blackwell

Dr. Blackwell was the first woman in the US to earn a medical degree.

-

Dr. Margaret "Mom" Chung

Dr. Margaret "Mom" Chung was the first Chinese American woman to become a physician.

-

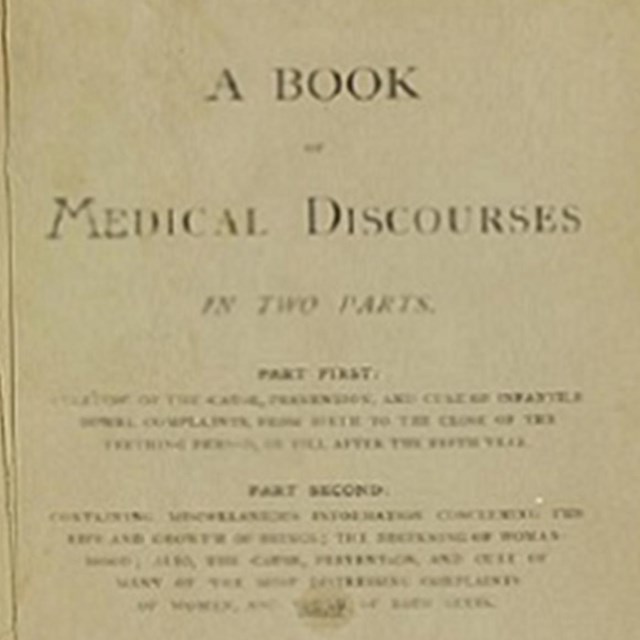

Dr. Rebecca Lee Crumpler

Dr. Crumpler was the first Black woman to earn a medical degree in the US.

-

Dr. Marie Equi

Dr. Equi was a physician and activist who focused on caring for poor and working-class patients in the American West.

-

Dr. Alice Hamilton

Dr. Hamilton was a pioneer in industrial health and worker safety.

-

Dr. Susan La Flesche Picotte

Dr. Susan La Flesche Picotte was the first American Indian to receive a medical degree.

-

Orlean Hawks Puckett

Puckett was a midwife in Appalachia who served her community for years.

-

Dr. Helen Rodríguez Trías

Dr. Helen Rodríguez Trías was a public health expert and women's rights activist.

-

Dr. Mary Edwards Walker

Dr. Mary Walker was a physician, women's suffrage advocate, Civil War veteran, and the only woman to receive the US Medal of Honor.

-

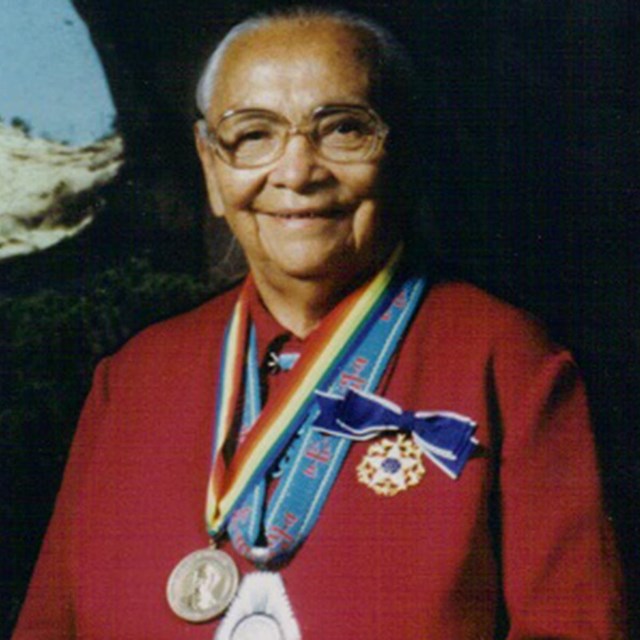

Annie Dodge Wauneka

A member of the Diné (Navajo) Nation, Annie Dodge Wauneka was a public health professional who served her community.


Associated Places

-

Clara Barton Homestead, Oxford, MA

Clara Barton grew up in this house. She went on to found the American Red Cross.

-

Blackwell's Island, New York City

Nellie Bly spent time as an inmate at the asylum here and wrote about the experience. Her reporting changed how patients were treated. The island is now known as Roosevelt Island.

-

Kalaupapa Peninsula, Hawaii

The peninsula was the site of a leper colony during the late 1800s and early 1900s. Leprosy is now called Hansen's disease.

Last updated: July 6, 2021